In Part 7, we used the "Easy Button." We pointed Vertex AI at a Google Drive folder and let Google’s infrastructure handle the heavy lifting of parsing, chunking, and indexing our enterprise data.

For 80% of internal applications, that is exactly what you should do. It is fast, managed, and highly scalable.

But we are engineers. We don't like black boxes.

What happens when your data doesn't fit neatly into a PDF? What if you are processing massive financial tables and need absolute control over how the text is split? What if you want to swap out the search algorithm entirely?

To build true enterprise intelligence, you have to know how the engine works.

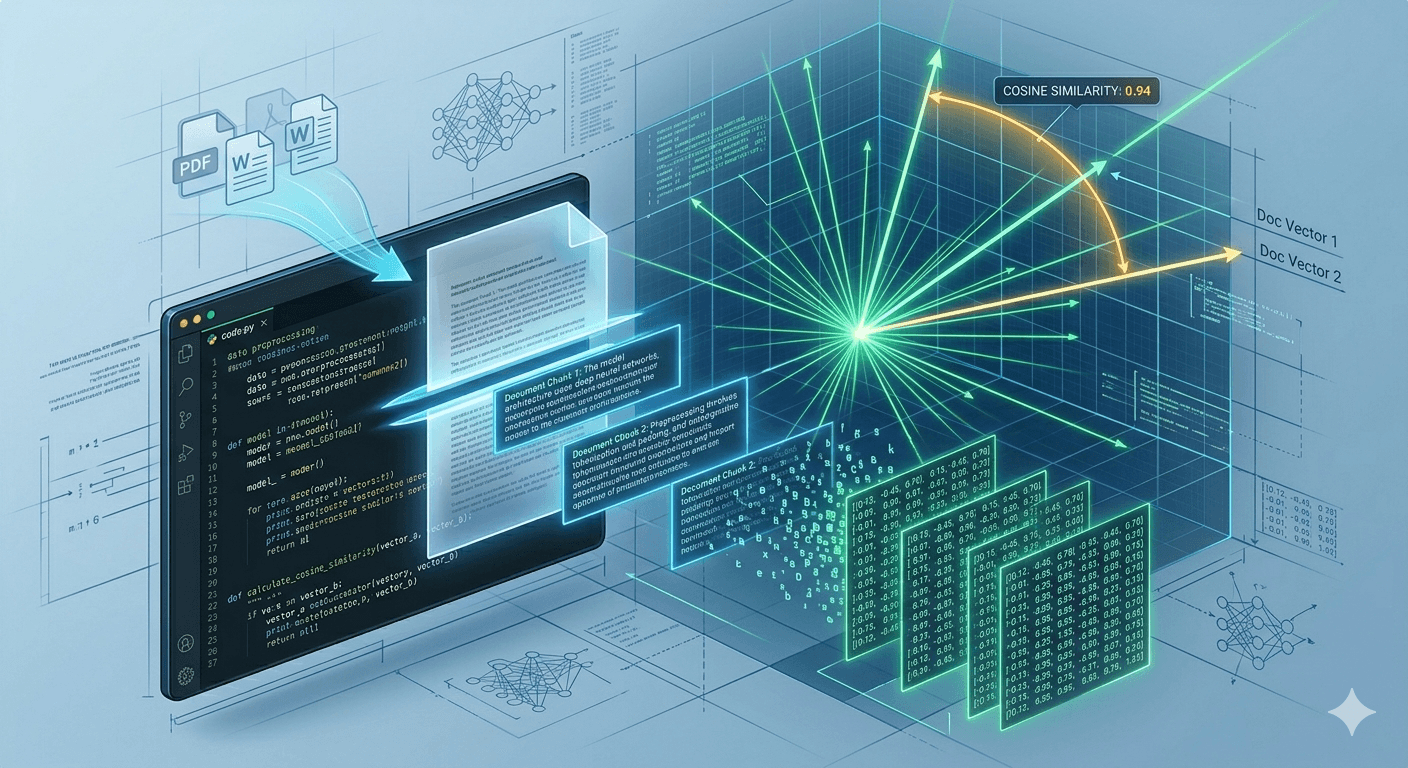

Today, we are taking the training wheels off. We are stepping out of our React frontend, opening up a Python environment, and building a Retrieval-Augmented Generation (RAG) pipeline from absolute scratch.

We are going to turn words into math, and use that math to find the truth.

Step 1: The Setup

First, let’s get our Python environment ready. You will need the Google Cloud AI Platform SDK and numpy for our vector math.

Open your terminal and run:

pip install google-cloud-aiplatform numpy

Now, create a new Python file (or open a Jupyter Notebook) and initialize the Vertex AI SDK. You must be authenticated with Google Cloud (via gcloud auth application-default login) for this to work locally.

import vertexai

from vertexai.language_models import TextEmbeddingModel

import numpy as np

# 1. Initialize the SDK

PROJECT_ID = "your-google-cloud-project-id"

LOCATION = "us-central1" # Or your preferred region

vertexai.init(project=PROJECT_ID, location=LOCATION)

print("✅ Vertex AI SDK Initialized.")

Step 2: Text Chunking (The Art of Context)

LLMs have a context window limit. You can't just shove a 1,000-page employee handbook into a prompt and ask a question. Even if you could, the model's accuracy drops when trying to pinpoint one specific fact in a sea of noise.

The solution is Chunking. We break our massive document into small, semantic pieces.

For this tutorial, let's simulate a company policy document. We will write a simple function to chunk it by paragraphs.

# Our simulated enterprise document

company_policy = """

PTO Policy: Employees receive 20 days of Paid Time Off per year. Up to 5 days can be carried over into the next calendar year.

Remote Work: Employees may work remotely up to 3 days per week. Tuesdays and Thursdays are mandatory in-office days.

Hardware: The company provides a MacBook Pro and a $500 stipend for home office equipment.

"""

# 2. Programmatically break the document into chunks

def chunk_text(text):

    # Strip surrounding whitespace and split on newlines to get logical paragraphs

    raw_chunks = text.strip().split('\n')

    # Filter out any empty strings

    return [chunk.strip() for chunk in raw_chunks if chunk.strip()]

document_chunks = chunk_text(company_policy)

print(f"✅ Document split into {len(document_chunks)} chunks.")

# document_chunks now holds:

# [0] PTO Policy: Employees...

# [1] Remote Work: Employees...

# [2] Hardware: The company...

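Architect's Note: In a production environment, you wouldn't just split by newlines. You would use a library like LangChain's RecursiveCharacterTextSplitter, which tries to split on natural boundaries first (paragraphs, then lines, then words) and lets neighboring chunks overlap slightly, so a sentence that straddles a boundary is less likely to be cut in half.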
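The overlap idea in the note above can be sketched without any library. This is a minimal, word-based stand-in, not LangChain's actual algorithm; the function name `chunk_with_overlap` and the `chunk_size` and `overlap` values are assumptions chosen for illustration.

```python
def chunk_with_overlap(text, chunk_size=12, overlap=4):
    """Split text into word-based chunks that share `overlap` words with the next chunk."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks


sample = (
    "Employees receive 20 days of Paid Time Off per year. "
    "Up to 5 days can be carried over into the next calendar year."
)
overlapping_chunks = chunk_with_overlap(sample, chunk_size=12, overlap=4)
for i, chunk in enumerate(overlapping_chunks):
    print(f"Chunk {i}: {chunk}")
```

Each chunk repeats the last four words of the one before it, so a fact sitting on a boundary shows up in both neighbors. A real splitter would also try to respect sentence and paragraph boundaries, which this sketch ignores.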

Step 3: Embeddings (Turning Words into Math)

This is the core of Data Science in the AI era.

An Embedding is an array of floating-point numbers (a vector) that represents the semantic meaning of a piece of text. If two sentences mean roughly the same thing, their vectors will be mathematically close to each other, even if they use completely different words.

We are going to use Google's cutting-edge text-embedding-004 model to convert our text chunks into vectors. By default, this model outputs an array of 768 numbers for every piece of text you give it.

# 3. Load the Embedding Model

embedding_model = TextEmbeddingModel.from_pretrained("text-embedding-004")

# Function to get an embedding for a single string

def get_embedding(text):

    embedding = embedding_model.get_embeddings([text])

    return embedding[0].values

# Embed all our document chunks

chunk_embeddings = [get_embedding(chunk) for chunk in document_chunks]

print(f"✅ Generated {len(chunk_embeddings)} embeddings.")

print(f"Dimensions per embedding: {len(chunk_embeddings[0])}") # Will print 768

Our document is now fully indexed in memory.

Step 4: The Search (Cosine Similarity)

Now, a user asks a question: "How many days can I work from home?"

We need to find the chunk of our document that holds the answer. To do this, we:

1. Run the user's question through the exact same embedding model.

2. Compare the question's vector against each of our document vectors.

3. Score each comparison using a mathematical formula called Cosine Similarity, and keep the chunk with the highest score.

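Cosine Similarity measures the cosine of the angle between two vectors, so it ranges from -1 to 1. A score of 1.0 means the vectors point in exactly the same direction. A score close to 0 means they are unrelated, and a negative score means they point in opposing directions.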
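A quick way to build intuition is to score a few hand-picked 2-D vectors before touching real embeddings. The sketch below uses only the standard library; the vectors are hypothetical toy values, and the helper name `cosine` is an assumption for this example.

```python
import math


def cosine(a, b):
    """Dot product of a and b divided by the product of their lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Same direction, different length: length does not matter, only the angle
same_direction = cosine([1.0, 2.0], [2.0, 4.0])

# Perpendicular vectors: nothing in common
perpendicular = cosine([1.0, 0.0], [0.0, 3.0])

# Opposite directions
opposite = cosine([1.0, 2.0], [-1.0, -2.0])

print(f"same direction: {same_direction:.2f}")
print(f"perpendicular:  {perpendicular:.2f}")
print(f"opposite:       {opposite:.2f}")
```

Note that the first pair scores roughly 1.0 even though the second vector is twice as long. That is why cosine similarity suits text: it compares direction (meaning), not magnitude.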

Let's write the math using numpy.

def cosine_similarity(vec1, vec2):

    # The dot product of the vectors divided by the product of their magnitudes

    return np.dot(vec1, vec2) / (np.linalg.norm(vec1) * np.linalg.norm(vec2))

# The User's Query

user_query = "How many days can I work from home?"

# 1. Embed the query

query_embedding = get_embedding(user_query)

# 2. Calculate the similarity score for every chunk

similarities = []

for i, doc_embedding in enumerate(chunk_embeddings):

    score = cosine_similarity(query_embedding, doc_embedding)

    similarities.append((score, document_chunks[i]))

# 3. Sort the results to find the highest score (the closest match)

similarities.sort(key=lambda x: x[0], reverse=True)

best_score, best_chunk = similarities[0]

print(f"🔍 User Asked: '{user_query}'\n")

print(f"🏆 Best Match Found (Score: {best_score:.4f}):")

print(f"➡️ {best_chunk}")

If you run this code, the output will look something like this (your exact score may differ):

🔍 User Asked: 'How many days can I work from home?'

🏆 Best Match Found (Score: 0.6842):

➡️ Remote Work: Employees may work remotely up to 3 days per week. Tuesdays and Thursdays are mandatory in-office days.
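The search above keeps only the single best chunk. Some questions need more than one chunk to answer, so a common variation is to return the top k matches instead. Here is a minimal sketch of that idea; it uses toy 3-D vectors in place of real 768-dimension embeddings, and the names `top_k`, `toy_chunks`, `toy_vectors`, and `toy_query` are assumptions for illustration. It is written in plain Python so it runs without credentials, but the numpy `cosine_similarity` function would slot in the same way.

```python
import heapq
import math


def cosine(a, b):
    """Cosine similarity using only the standard library."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k(query_vec, chunk_vecs, chunks, k=2):
    """Return the k highest-scoring (score, chunk) pairs, best first."""
    scored = [(cosine(query_vec, vec), chunk) for vec, chunk in zip(chunk_vecs, chunks)]
    return heapq.nlargest(k, scored, key=lambda pair: pair[0])


# Hypothetical toy data: each vector stands in for a real embedding
toy_chunks = ["PTO Policy", "Remote Work", "Hardware"]
toy_vectors = [
    [0.9, 0.1, 0.0],
    [0.1, 0.9, 0.2],
    [0.0, 0.2, 0.9],
]
toy_query = [0.2, 0.8, 0.1]

results = top_k(toy_query, toy_vectors, toy_chunks, k=2)
for score, chunk in results:
    print(f"{score:.4f}  {chunk}")
```

With these toy values, "Remote Work" ranks first and "PTO Policy" second. In a real pipeline you would pass `query_embedding`, `chunk_embeddings`, and `document_chunks` instead.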

The Engine is Complete

Look at what you just built. Without using a massive vector database, you engineered a semantic search engine.

Notice that the user asked about "working from home," but the text says "work remotely." A traditional exact-phrase search (like CTRL+F) would have failed. But because we used embeddings, the AI understood that the two phrases mean the same thing.

To complete the RAG pipeline, you simply take that best_chunk, inject it into a prompt alongside the user's question, and send it to Gemini to generate a polite, conversational answer.
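The injection step can be sketched as a plain string template. This is one possible prompt layout, not a prescribed format; the function name `build_rag_prompt` and the instruction wording are assumptions for illustration. The Gemini call itself is left out because it needs credentials and a model of your choice.

```python
def build_rag_prompt(question, context_chunks):
    """Combine retrieved chunks and the user's question into a single prompt string."""
    # Number each chunk so the model (and you) can tell the sources apart
    context = "\n\n".join(
        f"[{i + 1}] {chunk}" for i, chunk in enumerate(context_chunks)
    )
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


example_prompt = build_rag_prompt(
    "How many days can I work from home?",
    [
        "Remote Work: Employees may work remotely up to 3 days per week. "
        "Tuesdays and Thursdays are mandatory in-office days."
    ],
)
print(example_prompt)
```

Telling the model to stay inside the supplied context is what keeps the answer grounded in your document rather than in whatever the model happens to remember.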

You now understand the fundamental data structures powering every massive enterprise AI application.

Coming Up Next: The Architect's View

Now that you know how to build a RAG pipeline, we need to talk about scale.

Running embeddings and sending massive amounts of context to an LLM gets expensive quickly. In Part 9, we are putting our CTO hats back on. We will look at Cost, Latency, and the game-changing feature that will save your enterprise budget: Context Caching.