Welcome to Part 2.

Over the last six posts of this series, we went on a massive engineering journey. We built a multimodal AI agent, wrapped it in strict Zod schemas, secured it behind a Firebase Cloud Function, and deployed a blazing-fast React frontend to consume it. We built the ultimate full-stack AI engine.

But an engine without fuel won't get you very far.

In Part 2, we are shifting our focus from building the application to engineering the intelligence. We are tackling the problems that come with scaling to the enterprise: data ingestion, vector databases, programmatic safety guardrails, and automated evaluation.

And we are starting with the biggest problem in Generative AI: Hallucinations.

Public Truth vs. Private Context

Large Language Models are brilliant, but they are confident liars. If you ask an LLM about your company’s new remote work policy or the specific API limits of your internal microservices, it will either guess, hallucinate, or apologize for not knowing.

In Part 1 of this series, we solved a version of this problem using Vertex AI Agent Builder. We "grounded" our agent using Google Search, allowing it to fetch real-time, public data before answering.

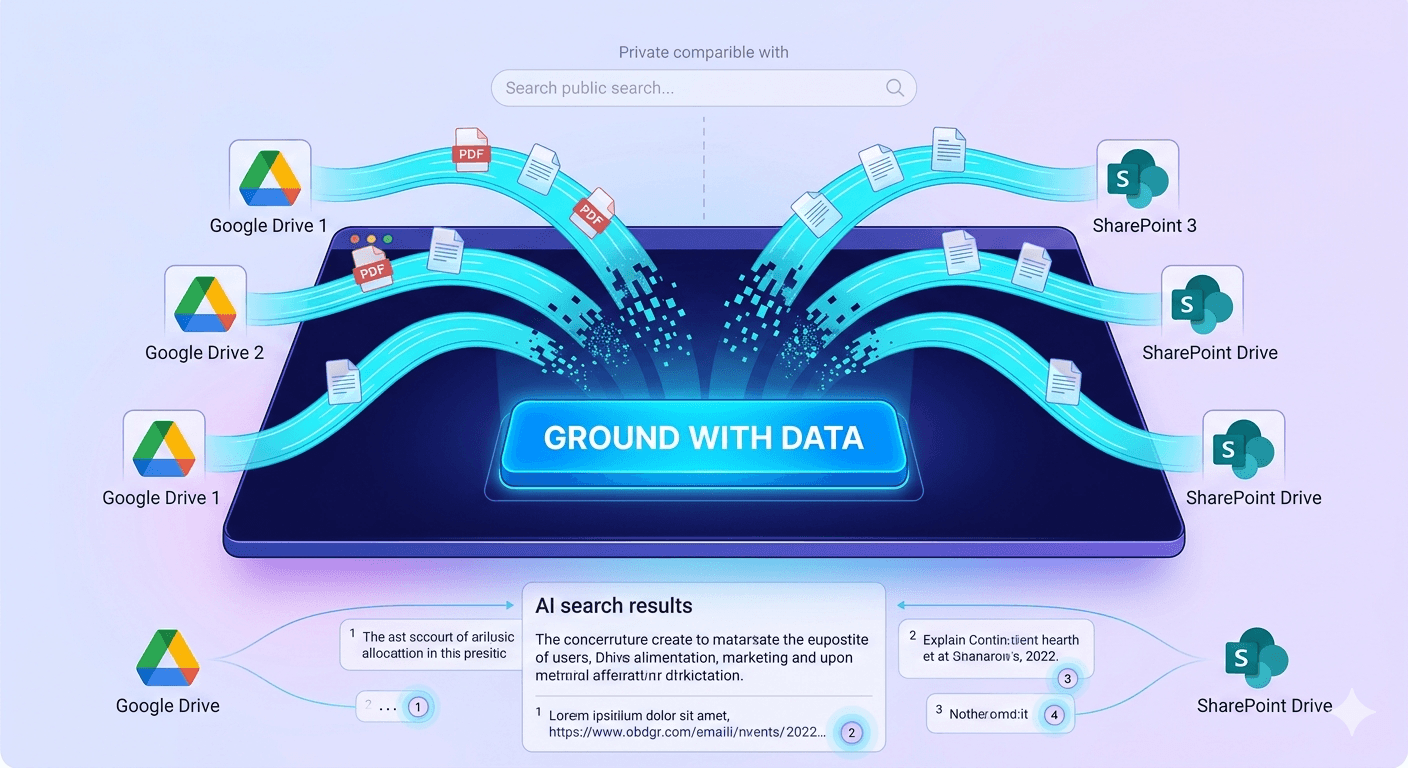

But enterprise value isn't built on public data. It’s built on proprietary data. It’s locked inside Google Drive folders, SharePoint sites, Confluence pages, and Jira tickets.

To make an AI useful inside a company, you have to inject your private data into the model's context window. In the industry, we call this RAG (Retrieval-Augmented Generation).
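Mechanically, that "injection" is prompt assembly: retrieved passages are placed in the prompt ahead of the user's question. Here is a minimal Python sketch of just that step, assuming the passages have already been retrieved. The `build_augmented_prompt` helper and the sample policy text are hypothetical.

```python
def build_augmented_prompt(question: str, passages: list[str]) -> str:
    """Place retrieved private passages in the prompt ahead of the user's question."""
    # Number each passage so the model (and a human reviewer) can refer back to it.
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


prompt = build_augmented_prompt(
    "How many PTO days can I carry over?",
    ["Employees may carry over up to 5 unused PTO days."],  # hypothetical policy text
)
print(prompt)
```

The model never "learns" your data here; it simply reads it at request time, which is why retrieval quality matters so much.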

The Enterprise Dilemma

If you want to build a RAG pipeline from scratch, you are in for a serious engineering project. You have to:

Extract text from messy PDFs and Word docs.

"Chunk" that text into semantically meaningful paragraphs.

Pass those chunks through an embedding model to turn words into numbers (vectors).

Store those vectors in a specialized database (like Pinecone or pgvector).

Write search algorithms to find the right chunks when a user asks a question.
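To make those steps concrete, here is a deliberately tiny, in-memory Python sketch of steps 2 through 5. It assumes the text is already extracted (step 1), and it swaps in a toy bag-of-words term-frequency vector for a real embedding model and a plain Python list for a vector database like Pinecone or pgvector. All function names are hypothetical; this shows the shape of the pipeline, not production retrieval quality.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Step 2: split text into windows of `size` words, each overlapping the previous by `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def embed(text: str) -> Counter:
    """Step 3 (toy stand-in): a bag-of-words term-frequency vector instead of a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def search(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[tuple[float, str]]:
    """Step 5: score every stored chunk against the query and return the top-k (score, chunk) pairs."""
    q = embed(query)
    scored = [(cosine(q, vec), chunk) for chunk, vec in index]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


# Hypothetical internal documents, already extracted to plain text (step 1).
docs = [
    "Employees may carry over up to five unused PTO days into the next calendar year.",
    "Remote work requires manager approval and a signed equipment agreement.",
    "Internal API rate limits are 100 requests per minute per service.",
]

# Step 4: the "vector store" is just a list of (chunk, vector) pairs held in memory.
index = [(chunk, embed(chunk)) for doc in docs for chunk in chunk_text(doc)]

top = search(index, "Can I carry over unused PTO days?", k=1)
print(top)
```

A real pipeline replaces `embed` with a learned embedding model (so "vacation" can match "PTO") and replaces the linear scan in `search` with an approximate nearest-neighbor index.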

We will do exactly that in the next post, using Python, because as senior engineers we need to know how the engine works.

But today? Today we are using The Easy Button.

If your goal is to rapidly prototype an internal "HR Bot" or "IT Helpdesk Agent" to show your CTO by the end of the day, you don't need a custom Python pipeline. You just need Vertex AI Agent Builder.

The "Easy Button": Vertex AI Data Connectors

Google Cloud knows that parsing PDFs is miserable work. So, they built managed Data Stores directly into Vertex AI. You literally point the cloud at your Google Drive or SharePoint, and Google handles the parsing, chunking, embedding, and vector storage invisibly in the background.

Here is how you build a fully grounded enterprise agent in about 5 minutes.

Step 1: Create the Data Store

Head back over to the Vertex AI Agent Builder console. Instead of creating an Agent first, click on Data Stores in the left navigation menu, and click Create Data Store.

You will be presented with a beautiful list of enterprise connectors: Cloud Storage, BigQuery, Jira, Confluence, SharePoint, and Google Drive.

For this example, I selected Google Drive.

(Note: you will need to authenticate with a Google Workspace account that has read access to the folders you want to index.)

Provide the URL to a specific Drive folder—say, the folder containing all your company's employee onboarding PDFs and benefits documents.

Step 2: The Managed Sync

Once you connect the folder, click Sync.

This is where you get to sit back and sip your coffee. Behind the scenes, Google's infrastructure is reading your PDFs, running OCR on images, extracting the text, embedding it, and spinning up a highly optimized vector search index.

What would take you three weeks to build yourself is finished in about 30 seconds for a small folder like this one; larger document sets take longer to index.

Step 3: Attach the Brain to the Agent

Now, go to the Agents tab and create a new agent (or open the one we built in Part 1).

In the agent's configuration panel, look for the Tools or Knowledge section. Click "Add Data Store" and select the Google Drive index you just finished building.

In your Agent's system instructions, add a simple directive: "You are an internal HR assistant. You must answer user questions based ONLY on the provided Data Store. If the answer is not in the Data Store, say 'I do not have access to that information.'"
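Agent Builder applies that directive for you. If you later assemble the request yourself, a minimal Python sketch of the same rule might look like this: keep only retrieved passages whose similarity score clears a threshold, and short-circuit to the refusal message when none do. The `build_messages` helper, the `0.2` threshold, and the generic role/content message format are assumptions for illustration, not Vertex AI's API.

```python
from typing import Optional

# The directive from the step above, used verbatim as the system instruction.
SYSTEM_INSTRUCTION = (
    "You are an internal HR assistant. You must answer user questions based ONLY on "
    "the provided Data Store. If the answer is not in the Data Store, say "
    "'I do not have access to that information.'"
)
REFUSAL = "I do not have access to that information."


def build_messages(
    question: str, hits: list[tuple[float, str]], min_score: float = 0.2
) -> Optional[list[dict]]:
    """Return chat messages grounded in sufficiently relevant hits, or None if there are none."""
    relevant = [text for score, text in hits if score >= min_score]
    if not relevant:
        # Nothing retrieved is close enough: refuse in code rather than letting the model guess.
        return None
    excerpts = "\n".join(f"- {text}" for text in relevant)
    return [
        {"role": "system", "content": SYSTEM_INSTRUCTION},
        {"role": "user", "content": f"Data Store excerpts:\n{excerpts}\n\nQuestion: {question}"},
    ]


# Hypothetical (score, passage) pairs returned by a retriever.
hits = [
    (0.62, "Employees may carry over up to five unused PTO days."),
    (0.05, "Remote work requires manager approval."),
]
messages = build_messages("Can I carry over PTO?", hits)
print(REFUSAL if messages is None else messages)
```

Enforcing the refusal in code as well as in the prompt means a weak retrieval result can never reach the model at all.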

The Payoff: Verifiable Truth

Open the test console on the right side of the screen and ask a highly specific question: "What is our company policy on carrying over PTO into the next calendar year?"

The agent won't guess. It will query your Google Drive index, retrieve the exact paragraph from your PDF, and generate a natural language response.

But here is the most critical feature for enterprise adoption: Citations.

Below the agent's answer, you will see a little numbered footnote. When you click it, Vertex AI provides a direct hyperlink to the exact Google Doc or PDF in your Drive that it used to generate the answer.
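Vertex AI renders these footnotes for you. In a hand-built pipeline, the same effect comes from carrying a source link alongside every chunk and emitting one numbered footnote per distinct document. A minimal Python sketch, where the `Chunk` type, the `format_with_citations` helper, and the example.com URLs are all hypothetical placeholders:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    source_uri: str  # where the chunk came from, e.g. a link to the original document


def format_with_citations(answer: str, used: list[Chunk]) -> str:
    """Append numbered footnotes, one per distinct source document, in first-use order."""
    sources: list[str] = []
    for chunk in used:
        if chunk.source_uri not in sources:
            sources.append(chunk.source_uri)
    markers = "".join(f"[{i}]" for i in range(1, len(sources) + 1))
    footnotes = "\n".join(f"[{i}] {uri}" for i, uri in enumerate(sources, start=1))
    return f"{answer} {markers}\n\n{footnotes}"


# Hypothetical chunks that the retriever handed to the model for this answer.
used = [
    Chunk("Up to five PTO days carry over.", "https://example.com/docs/pto-policy.pdf"),
    Chunk("Carried-over days must be used by Q1.", "https://example.com/docs/pto-policy.pdf"),
    Chunk("Managers approve PTO requests.", "https://example.com/docs/handbook.pdf"),
]
print(format_with_citations("You can carry over up to five PTO days.", used))
```

This sketch attaches every marker to the end of the answer; placing each marker next to the specific sentence it supports requires the model to report which chunk backs which claim.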

This is how you build trust with enterprise stakeholders. You aren't just giving them an AI; you are giving them a verifiable research assistant.

Coming Up Next: RAG from Scratch

Vertex AI Data Connectors are incredible for roughly 80% of enterprise use cases. If your data lives in standard documents and you need a bot fast, this is the architecture you choose.

But what about the other 20%?

What if your data is highly complex? What if you need custom chunking strategies for financial tables? What if you are building an application outside of the Vertex UI and need to control the exact math behind the vector search?
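What a "custom chunking strategy" means in practice depends on the data. As one illustration only (not necessarily the approach the next post takes), here is a Python sketch of row-group chunking for a CSV table that repeats the header row in every chunk, so a retrieved chunk like "Q3 | 14.0 | 21%" never arrives without its column names. The `chunk_table` helper and the figures in the sample table are made up.

```python
import csv
import io


def chunk_table(csv_text: str, rows_per_chunk: int = 2) -> list[str]:
    """Split a CSV table into groups of rows, repeating the header row in each chunk."""
    rows = [row for row in csv.reader(io.StringIO(csv_text)) if row]  # skip blank lines
    header, body = rows[0], rows[1:]
    chunks = []
    for start in range(0, len(body), rows_per_chunk):
        group = body[start:start + rows_per_chunk]
        lines = [" | ".join(header)] + [" | ".join(row) for row in group]
        chunks.append("\n".join(lines))
    return chunks


# Hypothetical quarterly figures.
table = """Quarter,Revenue (USD M),Operating margin
Q1,12.4,18%
Q2,13.1,19%
Q3,14.0,21%
"""
for chunk in chunk_table(table):
    print(chunk, end="\n\n")
```

A generic word-window chunker would happily split this table mid-row and strip the header from later chunks, which is exactly the failure mode that motivates custom strategies.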

In Post 2 of Part 2, we are pulling back the curtain. We are opening up a Jupyter Notebook, spinning up the Python SDK, and building a custom RAG pipeline from absolute scratch. Get ready to write some code.