Part 4: Prompt Engineering Lab: Mastering Multimodal System Instructions in Google AI Studio

By Ben White | Part 4 of the "Agentic Architect" Series (Phase 2)

We have officially entered Phase 2 🚀. In our first three posts, we tackled the "Orchestration Layer"—building grounded research agents with Vertex AI and the Python SDK. We proved we can make an AI fetch real-world data.

But now, we need to build a product.

Moving an AI feature into a production application requires predictability. You cannot have an AI agent breaking character, ignoring constraints, or outputting messy text when your frontend is expecting clean JSON.

Today, we are stepping into the laboratory. We are going to master Google AI Studio, ditch "voodoo prompting," and build a highly constrained, multimodal System Instruction for our next app.

And at the end of this post, I’m giving away my personal Agentic Architect Prompt Library to help you bypass the trial-and-error phase.

The Death of "Voodoo Prompting"

In 2023, prompt engineering looked like magic spells. We added phrases like "Take a deep breath," "Think step-by-step," or "I will tip you $20" to coax better answers out of fragile models.

In 2026, with models like Gemini 2.5 Pro, prompt engineering is systems engineering.

We don't beg the model; we program it using System Instructions. A System Instruction sets the boundaries of your agent's universe. It defines the persona, the rules, the tone, and most importantly, the exact output structure required by your application code.

The Workbench: Google AI Studio

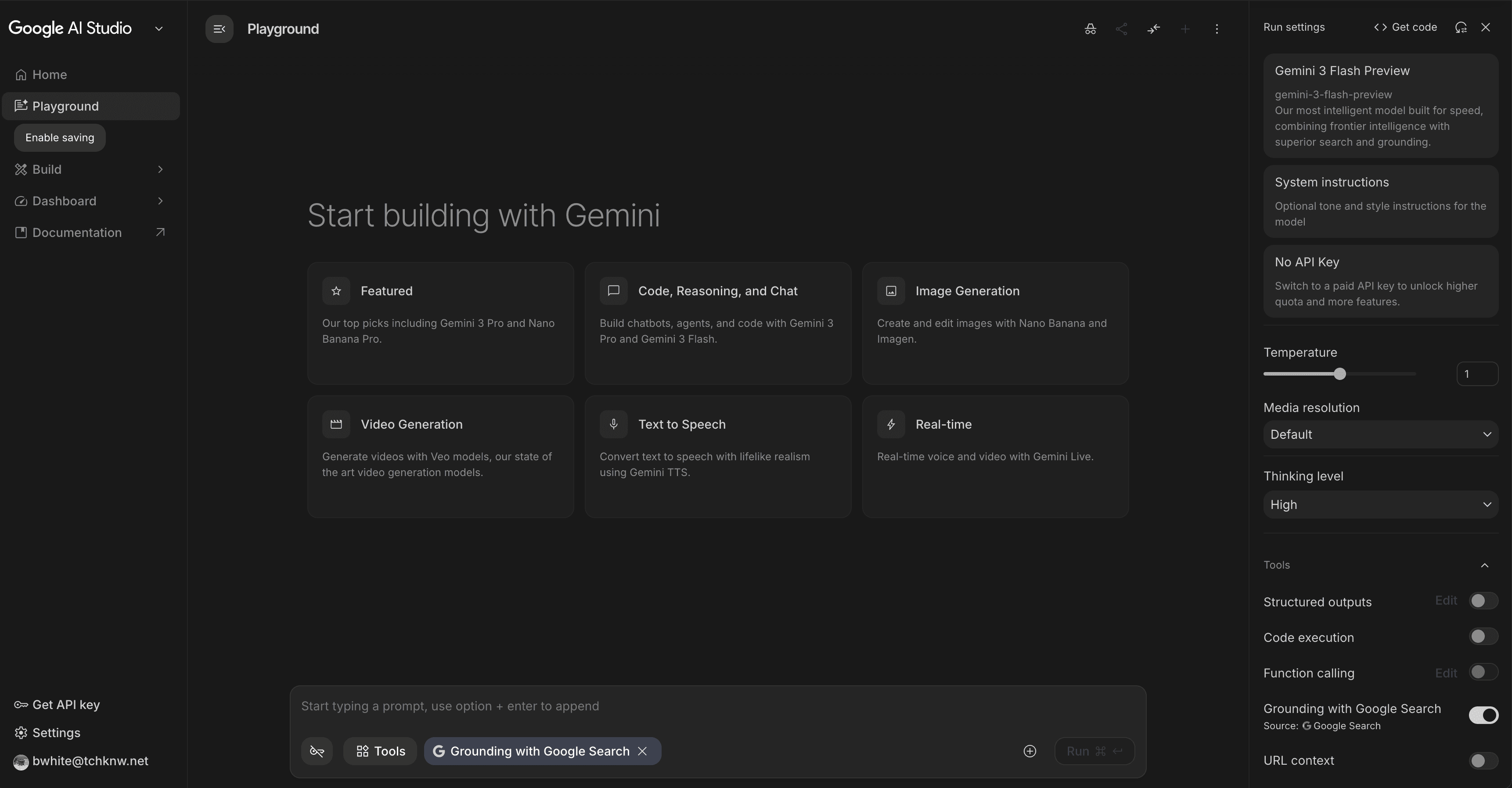

To build this, we aren't using VS Code (yet), and we aren't using the consumer-facing Gemini web interface. We are using Google AI Studio.

AI Studio is the developer's sandbox. It gives us granular control over System Instructions, Temperature, Output Formatting, and Safety Settings without writing a single line of boilerplate code.

Let's build the brain for our next application: UX-Copilot, an agent that can watch a screen recording of an app and provide a professional UX critique.

The Multimodal Advantage

Some developers are still treating LLMs like advanced text-message bots. But Gemini was built from the ground up to be natively multimodal. It doesn't just read text; it sees images, video, and audio in the exact same way it reads code.

Instead of typing, "Imagine an app with a blue button on the left..." we are literally going to show it the app.

Step 1: The Upload

In AI Studio, I click the + button in the chat interface and upload a short screen recording (an .mp4 file) of a mobile app prototype I’m working on.


Step 2: The System Instruction Architecture

Next, we move to the right-hand "Run settings" panel: System Instructions. To make this production-ready, I use a specific 4-part architecture: Role, Context, Constraints, and Output Format.

Here is the exact instruction for UX-Copilot:

ROLE: You are an elite Lead UX/UI Designer with 15 years of experience in mobile app accessibility and conversion rate optimization (CRO).

CONTEXT: The user will provide a video, image, or description of a user interface. Your job is to analyze the visual hierarchy, color contrast, and user flow.

CONSTRAINTS:
1. Be brutally honest but highly constructive.
2. Focus on actionable feedback (e.g., "Increase button padding by 8px").
3. Always check for mobile accessibility issues (touch targets, text size).
4. DO NOT write code. Only critique the design.

OUTPUT FORMAT: You must return your response in a strict markdown structure with three headers:
- 🔴 Critical Flaws
- 🟡 Minor Friction Points
- 🟢 What Works Well
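If you want to keep this four-part structure in version control rather than in a text box, here is a minimal Python sketch of how it could be modeled. The `SystemInstruction` class and its field names are my own illustration, not part of AI Studio or any SDK; the text it renders is the UX-Copilot instruction above.

```python
from dataclasses import dataclass


@dataclass
class SystemInstruction:
    """Hypothetical container for the Role / Context / Constraints / Output Format parts."""

    role: str
    context: str
    constraints: list[str]
    output_headers: list[str]

    def render(self) -> str:
        # Number the constraints so the model can tell the rules apart.
        numbered = "\n".join(
            f"{i}. {rule}" for i, rule in enumerate(self.constraints, start=1)
        )
        # One bullet per required markdown header.
        headers = "\n".join(f"- {h}" for h in self.output_headers)
        return (
            f"ROLE: {self.role}\n\n"
            f"CONTEXT: {self.context}\n\n"
            f"CONSTRAINTS:\n{numbered}\n\n"
            "OUTPUT FORMAT: You must return your response in a strict markdown "
            f"structure with {len(self.output_headers)} headers:\n{headers}"
        )


UX_COPILOT = SystemInstruction(
    role=(
        "You are an elite Lead UX/UI Designer with 15 years of experience in "
        "mobile app accessibility and conversion rate optimization (CRO)."
    ),
    context=(
        "The user will provide a video, image, or description of a user interface. "
        "Your job is to analyze the visual hierarchy, color contrast, and user flow."
    ),
    constraints=[
        "Be brutally honest but highly constructive.",
        'Focus on actionable feedback (e.g., "Increase button padding by 8px").',
        "Always check for mobile accessibility issues (touch targets, text size).",
        "DO NOT write code. Only critique the design.",
    ],
    output_headers=["🔴 Critical Flaws", "🟡 Minor Friction Points", "🟢 What Works Well"],
)

prompt = UX_COPILOT.render()
print(prompt)
```

Keeping the four parts as separate fields makes it easy to swap a constraint or a header during testing without retyping the whole instruction.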

Step 3: The Execution

We set the Temperature to 0.2 (we want analytical consistency, not creative poetry).

User Prompt: "Review this user flow. How do we improve our CRO?" (CRO stands for conversion rate optimization.)
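For readers who like to see what the lab settings turn into, here is a rough sketch of the same run expressed as a request body. It assumes the shape of the Gemini API's REST `generateContent` payload as I understand it (`system_instruction`, `contents`, `generationConfig`), so check the field names against the official reference before relying on them. The `build_request` helper and the file URI are hypothetical placeholders, and nothing here sends a request.

```python
import json


def build_request(
    system_instruction: str,
    user_prompt: str,
    video_uri: str,
    temperature: float = 0.2,
) -> dict:
    """Assemble a generateContent-style body (assumed field names, see lead-in)."""
    return {
        # The system instruction travels separately from the user turn.
        "system_instruction": {"parts": [{"text": system_instruction}]},
        "contents": [
            {
                "role": "user",
                "parts": [
                    # Reference to a previously uploaded video (placeholder URI).
                    {"file_data": {"mime_type": "video/mp4", "file_uri": video_uri}},
                    {"text": user_prompt},
                ],
            }
        ],
        # Low temperature for analytical consistency.
        "generationConfig": {"temperature": temperature},
    }


payload = build_request(
    system_instruction="ROLE: You are an elite Lead UX/UI Designer ...",
    user_prompt="Review this user flow. How do we improve our CRO?",
    video_uri="HYPOTHETICAL_FILE_URI",  # placeholder, not a real upload
)
print(json.dumps(payload, indent=2, ensure_ascii=False))
```

The point of the sketch is the separation of concerns: the instruction, the media, the user prompt, and the temperature are four independent inputs, which is exactly how the Run settings panel presents them.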

Gemini's Output:

🔴 Critical Flaws

Form Fatigue & Conversion Drop-off: The "Request for Proposal" form (0:19) is exhaustingly long for a mobile experience. Asking for contact info, project details, technical requirements, and an NDA on a single scrolling page will lead to high abandonment rates.

Action: Convert this into a multi-step "Wizard" flow (e.g., Step 1: Contact, Step 2: Project, Step 3: Tech).

Touch Target Violations: In the Technical Requirements section (0:24) and the Pricing Calculator (1:09), the "Add" buttons and checkboxes are dangerously close to other interactive elements. On mobile, these need a minimum 44x44px hit area to prevent "fat-finger" errors.

Action: Increase the vertical margin between list items by at least 12px and ensure the "Add" button has a larger tap radius.

Contrast on Gradients: The white subtext over the light blue/green gradients (0:05, 0:35) likely fails WCAG 2.1 AA standards for accessibility. Users with visual impairments or those viewing in direct sunlight will struggle to read the "Advanced Intelligence..." descriptions.

Action: Darken the top layer of the background gradient or add a subtle text shadow to increase legibility.

🟡 Minor Friction Points

CTA Competition: In the Hero section (0:00), "Book Free Consultation" and "View Pricing" have nearly equal visual weight. This creates a "paradox of choice" for the user.

Action: Make "Book Free Consultation" a solid high-contrast color (like a vibrant emerald or orange) and keep "View Pricing" as an outlined/ghost button.

Acronym Ambiguity: The primary navigation uses "TES" (0:03). Unless a user is already familiar with "Technology Enhanced Strategies," this is a "mystery meat" navigation item.

Action: Use the full name or a more descriptive label like "Strategies" or "Solutions" in the top-level menu.

Calculator Scrolling: The Pricing Calculator (1:08) requires significant vertical scrolling to see the "Your Estimate" box. Users might lose context of their total as they select add-ons.

Action: Implement a "Sticky Summary Bar" at the bottom of the screen that updates the total in real-time as the user scrolls and selects options.

🟢 What Works Well

High-Value Interactive Tools: The Project Pricing Calculator and ROI Calculator (1:04, 1:40) are masterclasses in CRO. They provide immediate value and transparency, which builds massive trust before the user even speaks to a human.

Visual Professionalism: The use of the "Recipe 11: SaaS Landing" aesthetic (split layouts, bold typography, clean cards) creates an immediate sense of "Enterprise Grade" quality.

Trust Signals: Explicitly mentioning the NDA (0:26) and showing the "Success" verification from Cloudflare are excellent ways to reduce user anxiety during the data-entry phase.

Mobile-Optimized Cards: The service cards (0:07) use clear icons and concise headers, making the content highly scannable for users on the go.

Boom. It didn't just guess; it watched the video, referenced specific timestamps (such as 0:07 for the service cards), and output the exact markdown structure we demanded.

Why this matters for the Application Layer

If I am building a SaaS app around this agent, I now know the shape of what the AI will return. Because the output follows a fixed three-header structure, my frontend developers can parse those markdown headers, check that all three are present, and render beautiful UI components around them.
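To make that concrete, here is a small sketch of what parsing could look like on the application side. It is one possible approach under my own assumptions, not code exported from AI Studio: `split_critique` is a hypothetical helper that looks for the three header labels (with or without leading `#` or surrounding `**` marks) and raises an error if the model ever drops one.

```python
import re

# The three headers demanded by the UX-Copilot system instruction.
EXPECTED_HEADERS = (
    "🔴 Critical Flaws",
    "🟡 Minor Friction Points",
    "🟢 What Works Well",
)


def split_critique(markdown: str) -> dict[str, str]:
    """Split the model's markdown reply into one text block per expected header."""
    sections: dict[str, list[str]] = {}
    current = None
    for line in markdown.splitlines():
        # Ignore markdown decoration (#, *, whitespace) when matching a header.
        label = re.sub(r"^[#\s*]+|[\s*]+$", "", line)
        if label in EXPECTED_HEADERS:
            current = label
            sections[current] = []
        elif current is not None:
            sections[current].append(line)

    # Fail loudly if the model ignored the output format.
    missing = [h for h in EXPECTED_HEADERS if h not in sections]
    if missing:
        raise ValueError(f"Model output is missing sections: {missing}")

    return {header: "\n".join(body).strip() for header, body in sections.items()}


sample = (
    "## 🔴 Critical Flaws\n"
    "Form is too long.\n\n"
    "## 🟡 Minor Friction Points\n"
    "CTA competition.\n\n"
    "## 🟢 What Works Well\n"
    "Calculators build trust.\n"
)
parsed = split_critique(sample)
print(list(parsed))
```

The validation step matters as much as the parsing: a low temperature makes the format likely, not guaranteed, so the frontend should handle the `ValueError` path (for example, by retrying the request).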

We have successfully engineered the prompt. We validated it with a video. We proved the logic works.

Your Turn: The Prompt Library

Writing production-grade System Instructions takes practice. To save you time, I’ve open-sourced my personal Agentic Architect Prompt Library.

It contains 5 battle-tested System Instructions using my Role-Context-Constraint-Output framework, including templates for:

The Strict JSON API Agent

The Code-Reviewing Copilot

The PII Redaction Agent (The Bouncer)

The Video/UX Critic (featured today)

👉 [Download / View the Prompt Library on GitHub Here]

Next Up: Wrapping it in Code

We have our Multimodal Agent perfectly tuned in the AI Studio laboratory.

But right now, it only lives inside AI Studio in the browser. In the next post, we are going to click the magical "Get Code" button, export this tuned prompt into our local environment, and build the actual backend endpoint using Firebase Genkit.

Phase 2 is heating up 🔥. See you next week!